Neuromorphic vision sensors, designed to mimic the human nervous system, are devices that respond autonomously to changes in their environment. Like sensory neurons, they respond preferentially to changes in their surroundings.

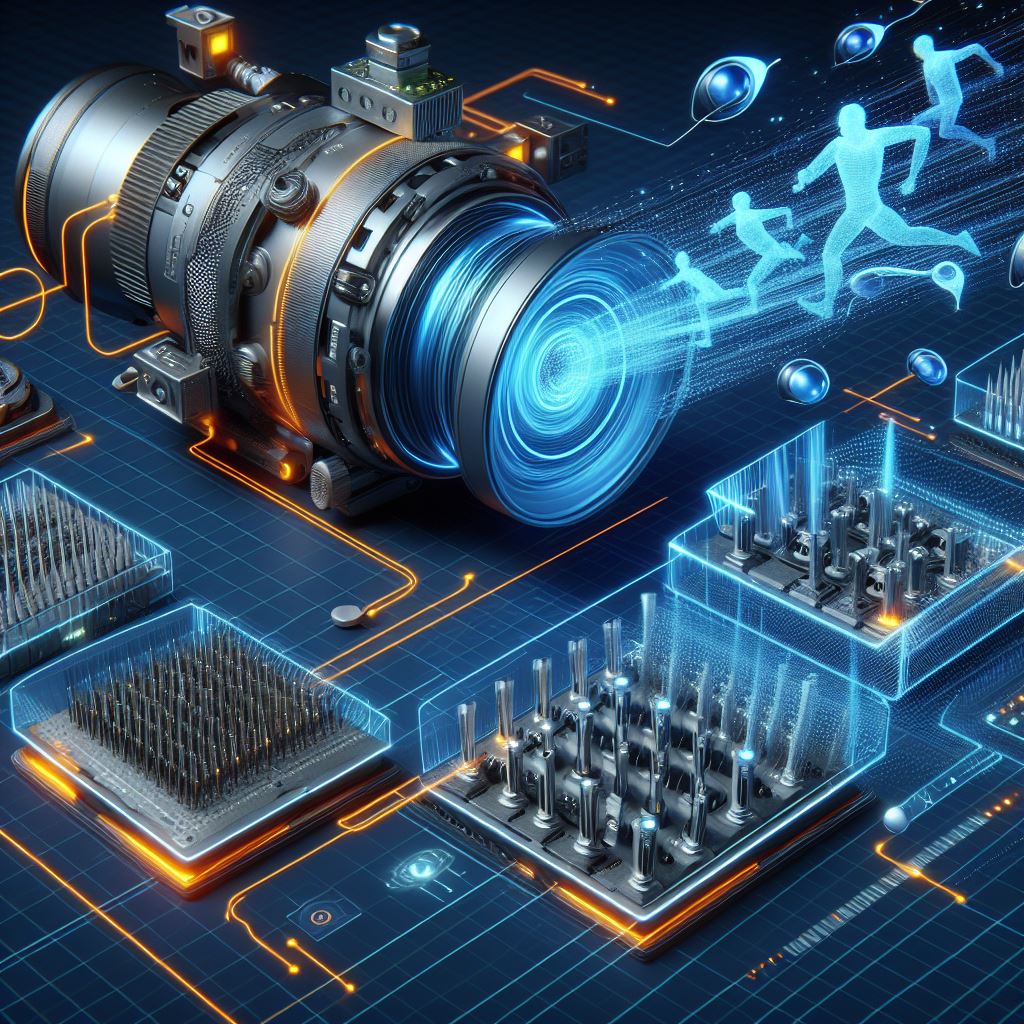

Traditionally, these sensors capture dynamic motion and transfer the data to computational units for analysis, which adds latency and increases power consumption. To address this, researchers from Hong Kong Polytechnic University, Huazhong University of Science and Technology, and Hong Kong University of Science and Technology have introduced event-driven vision sensors that not only capture dynamic motion but also convert it into programmable spiking signals.

In a paper published in Nature Electronics, the team presents a design that eliminates the need to transfer data from sensors to computational units, which improves energy efficiency and speeds up the analysis of dynamic motion.

"Near-sensor and in-sensor computing architecture efficiently decrease data transfer latency and power consumption by directly performing computation tasks near or inside the sensory terminals," explains Yang Chai, co-author of the paper.

The research combines event-based sensors with spiking neural networks (SNNs), artificial neural networks that mimic the firing patterns of neurons. The approach aims to reduce redundant data and improve motion recognition.

Chai elaborates, "The combination of event-based sensors and spiking neural network (SNN) for motion analysis can effectively reduce the redundant data and efficiently recognize the motion."
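To make the idea of a spiking neuron concrete, here is a minimal sketch of a leaky integrate-and-fire neuron, a common textbook SNN building block. It is an illustration only, not the model used in the paper; the function name `lif_spikes` and the `leak` and `threshold` values are assumptions chosen for the example.

```python
def lif_spikes(inputs, leak=0.9, threshold=1.0):
    """Return a 0/1 spike train for one leaky integrate-and-fire neuron.

    Illustrative textbook model; parameter values are assumptions,
    not taken from the paper.
    """
    potential = 0.0
    spikes = []
    for current in inputs:
        # The membrane potential decays by `leak`, then adds the new input.
        potential = leak * potential + current
        if potential >= threshold:
            spikes.append(1)   # fire a spike
            potential = 0.0    # reset after firing
        else:
            spikes.append(0)   # stay silent
    return spikes


steady = lif_spikes([0.3] * 6)   # constant weak input accumulates until a spike
silent = lif_spikes([0.0] * 6)   # no input, no spikes
print(steady)
print(silent)
```

The neuron produces output only when accumulated input crosses the threshold, so an input stream with no activity yields no spikes at all, which is the sense in which spiking networks avoid processing redundant data.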

The newly developed computational event-driven vision sensors combine event-based sensing and computation in a single device. They generate programmable spikes in response to changes in light intensity, and obtain this event-driven behavior by using branches with opposite photoresponses.

Chai adds, "Our work proposes a method to sense and process the scenario by capturing local pixel-level light intensity change, thus realizing in-sensor SNN instead of conventional ANN."
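The pixel-level principle Chai describes can be sketched in software as follows. This is a generic model of event-based sensing, assuming a simple comparison between two frames; it does not reproduce the authors' device, which performs this step in hardware. The function name `intensity_events`, the `threshold` value, and the sample frames are hypothetical.

```python
def intensity_events(previous, current, threshold=0.2):
    """Emit +1, -1, or 0 per pixel depending on the change in intensity.

    Generic software sketch of event-based sensing; the threshold is an
    assumed value, not a parameter from the paper.
    """
    events = []
    for prev_row, cur_row in zip(previous, current):
        row = []
        for before, after in zip(prev_row, cur_row):
            delta = after - before
            if delta > threshold:
                row.append(1)    # pixel became brighter
            elif delta < -threshold:
                row.append(-1)   # pixel became darker
            else:
                row.append(0)    # no significant change, no event
        events.append(row)
    return events


# A bright spot moves one pixel to the right between two frames.
frame_a = [[0.1, 0.1, 0.1],
           [0.1, 0.9, 0.1]]
frame_b = [[0.1, 0.1, 0.1],
           [0.1, 0.1, 0.9]]
events = intensity_events(frame_a, frame_b)
print(events)
```

Only the two pixels touched by the moving spot produce events; the static background produces none.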

Initial tests demonstrate that these sensors effectively emulate how neurons in the brain adapt to changes in a visual scene. Importantly, they reduce the amount of data collected and eliminate the need to transfer data to external computational units.

Looking ahead, the researchers aim to further develop and test these computational event-driven vision sensors for real-world applications. Their work may inspire advances in sensing technologies that combine event-based sensors and SNNs, with potential applications in autonomous driving and intelligent robots.

"In the future, our group will focus on array-level realization and the integration technology of computational sensor array and CMOS circuits to demonstrate a complete in-sensor computing system," Chai says, outlining the next steps. "We will try to develop the benchmark to define the device metric requirements for different applications and evaluate the performance of in-sensor computing system in a quantitative way."