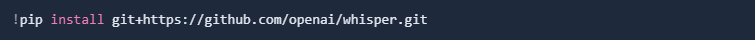

The Whisper model is available on GitHub. You can install it directly from a Jupyter notebook with the following command:
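In a notebook cell this is usually a one-line `pip` call prefixed with `!`. The sketch below does the same from plain Python. The repository URL is the one given in the OpenAI Whisper README; the helper names `build_install_command` and `install_whisper` are illustrative and not part of any library.

```python
import subprocess
import sys

# Repository URL as listed in the openai/whisper README.
WHISPER_REPO = "git+https://github.com/openai/whisper.git"


def build_install_command(package=WHISPER_REPO):
    """Return the pip command that installs Whisper into the running kernel."""
    # sys.executable makes pip target the interpreter behind this notebook.
    return [sys.executable, "-m", "pip", "install", package]


def install_whisper():
    """Run the install; needs network access and git on the PATH."""
    subprocess.check_call(build_install_command())


# Show the command without running it.
print(" ".join(build_install_command()))
```

Call `install_whisper()` once you are happy with the printed command.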

Whisper needs ffmpeg installed on the machine it runs on. You may already have it, but it's likely that you will need to install it on your local machine first.

OpenAI lists several ways to install this package; we will use the Scoop package manager. Here is a tutorial on how to do it manually.

In the Jupyter Notebook you can install it with the following command:
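As a rough sketch, the snippet below checks whether ffmpeg is already on the PATH and, if not, prints the Scoop command from the Whisper README (`scoop install ffmpeg`, Windows only). The helper names are made up for this example, and `install_ffmpeg_with_scoop` assumes Scoop itself is already installed.

```python
import shutil
import subprocess

# Scoop command as given in the Whisper README (Windows only).
SCOOP_INSTALL_FFMPEG = ["scoop", "install", "ffmpeg"]


def ffmpeg_available():
    """Return True if an ffmpeg executable can be found on the PATH."""
    return shutil.which("ffmpeg") is not None


def install_ffmpeg_with_scoop():
    """Install ffmpeg via Scoop; raises if Scoop is not on the PATH."""
    scoop = shutil.which("scoop")
    if scoop is None:
        raise RuntimeError("Scoop was not found on the PATH")
    subprocess.check_call([scoop] + SCOOP_INSTALL_FFMPEG[1:])


if ffmpeg_available():
    print("ffmpeg found")
else:
    print("ffmpeg missing; run:", " ".join(SCOOP_INSTALL_FFMPEG))
```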

After the installation, a restart is required if you are using your local machine.

Now we can continue. Next we import all necessary libraries:

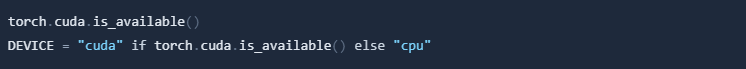
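A minimal sketch of the import step, assuming the notebook needs `whisper`, `torch` and `numpy` (the exact set used here is an assumption). It first checks that each package can be found, so a missing install shows up as a readable message rather than a traceback.

```python
import importlib.util

# Assumed package set for this walkthrough; adjust to your notebook.
REQUIRED = ("whisper", "torch", "numpy")


def missing_packages(names=REQUIRED):
    """Return the names that cannot be found in the current environment."""
    return [n for n in names if importlib.util.find_spec(n) is None]


missing = missing_packages()
if missing:
    print("Install these first:", ", ".join(missing))
else:
    # Everything is present, so the real imports are safe to run.
    import whisper
    import torch
    import numpy as np
```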

Using a GPU is the preferred way to run Whisper. If you are using a local machine, you can check whether a GPU is available. The first line returns False if no CUDA-compatible NVIDIA GPU is available and True if one is. The second line of code sets the device so that the GPU is used whenever it is available.

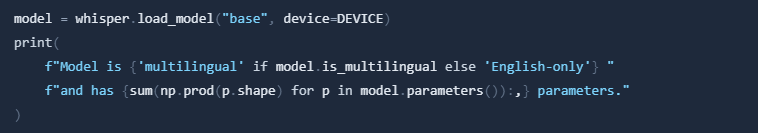
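The GPU check described above can be sketched as follows. `torch.cuda.is_available()` is the standard PyTorch call; the `pick_device` wrapper and the fallback to `"cpu"` when PyTorch is not installed are additions for this example.

```python
def pick_device():
    """Return "cuda" when a CUDA-capable GPU is usable, otherwise "cpu"."""
    try:
        import torch
    except ImportError:
        # Without PyTorch there is nothing to run on the GPU anyway.
        return "cpu"
    return "cuda" if torch.cuda.is_available() else "cpu"


DEVICE = pick_device()
print("Using device:", DEVICE)
```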

Now we can load the Whisper model. The model is loaded with the following command:

Please keep in mind that several different models are available. You can find all of them here. Each one trades off accuracy against speed (compute needed). We will use the 'base' model for this tutorial.

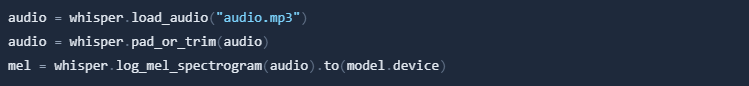
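Loading the model might look like the sketch below. `whisper.load_model(name, device=...)` is the library call; the `load_whisper_model` wrapper and the `MODEL_NAMES` tuple (a subset of the published model names) are illustrative additions that catch a mistyped name before any download starts.

```python
# A subset of the model names published in the Whisper README.
MODEL_NAMES = ("tiny", "base", "small", "medium", "large")


def load_whisper_model(name="base", device="cpu"):
    """Load a Whisper checkpoint by name; it is downloaded on first use."""
    if name not in MODEL_NAMES:
        raise ValueError(f"unknown model {name!r}; expected one of {MODEL_NAMES}")
    # Imported lazily so the name check works even without the package.
    import whisper

    return whisper.load_model(name, device=device)
```

For this tutorial the call would be `model = load_whisper_model("base", device="cuda")` on a GPU machine, or `device="cpu"` otherwise.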

Next, load the audio file you want to transcribe.

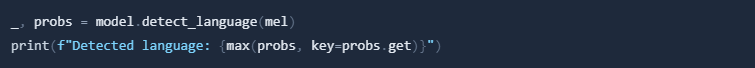
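A sketch of the loading step, following the pattern in the Whisper README: `load_audio` decodes the file through ffmpeg, `pad_or_trim` fits it to a 30-second window, and `log_mel_spectrogram` produces the model input. The `prepare_mel` wrapper and the file path you pass to it are placeholders for this example.

```python
import os


def prepare_mel(model, path):
    """Return a 30-second log-Mel spectrogram of `path` on the model's device."""
    if not os.path.isfile(path):
        raise FileNotFoundError(path)
    # Imported lazily so the path check works even without the package.
    import whisper

    audio = whisper.load_audio(path)    # decoded via ffmpeg to 16 kHz mono
    audio = whisper.pad_or_trim(audio)  # pad or cut to exactly 30 seconds
    # Use the Mel-bin count the loaded checkpoint expects.
    mel = whisper.log_mel_spectrogram(audio, n_mels=model.dims.n_mels)
    return mel.to(model.device)
```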

The detect_language function detects the language of your audio file:

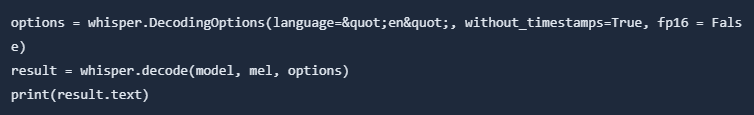
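In code, language detection can be sketched like this. For a single spectrogram, `model.detect_language(mel)` returns a pair whose second element maps language codes to probabilities; the two helper functions are illustrative wrappers around that call.

```python
def most_likely_language(probs):
    """Return the language code with the highest probability."""
    return max(probs, key=probs.get)


def detect_language(model, mel):
    """Detect the spoken language of one 30-second spectrogram."""
    # The first element (language tokens) is not needed here.
    _, probs = model.detect_language(mel)
    return most_likely_language(probs)
```

A typical use is `print("Detected language:", detect_language(model, mel))`.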

We transcribe the first 30 seconds of the audio using DecodingOptions and the decode function, then print the result:

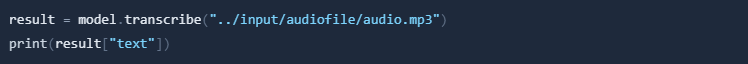
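A minimal sketch of this decoding step, assuming `model` is a loaded Whisper model and `mel` is the spectrogram of the first 30 seconds. `whisper.DecodingOptions` and `whisper.decode` are the library calls; the helpers and the choice to disable half precision on CPU are additions for this example.

```python
def wants_fp16(device_type):
    """Half precision is only used on a CUDA device."""
    return device_type == "cuda"


def decode_window(model, mel):
    """Decode one 30-second spectrogram and return the recognized text."""
    # Imported lazily so wants_fp16 can be used without the package.
    import whisper

    options = whisper.DecodingOptions(fp16=wants_fp16(model.device.type))
    result = whisper.decode(model, mel, options)
    return result.text
```

Printing the result is then `print(decode_window(model, mel))`.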

Next we can transcribe the whole audio file.
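For the full file, `model.transcribe` handles the 30-second windowing internally and returns a dictionary with the text under the `"text"` key. The sketch below wraps that call; the `transcribe_file` name and the `audio.mp3` path are placeholders.

```python
def transcribe_file(model, path):
    """Transcribe a whole audio file and return the text."""
    # Only request half precision when the model sits on a GPU.
    use_fp16 = model.device.type == "cuda"
    result = model.transcribe(path, fp16=use_fp16)
    return result["text"].strip()


# Usage, with a loaded model:
# print(transcribe_file(model, "audio.mp3"))
```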

Once execution has finished, this prints the transcription of the whole audio file.